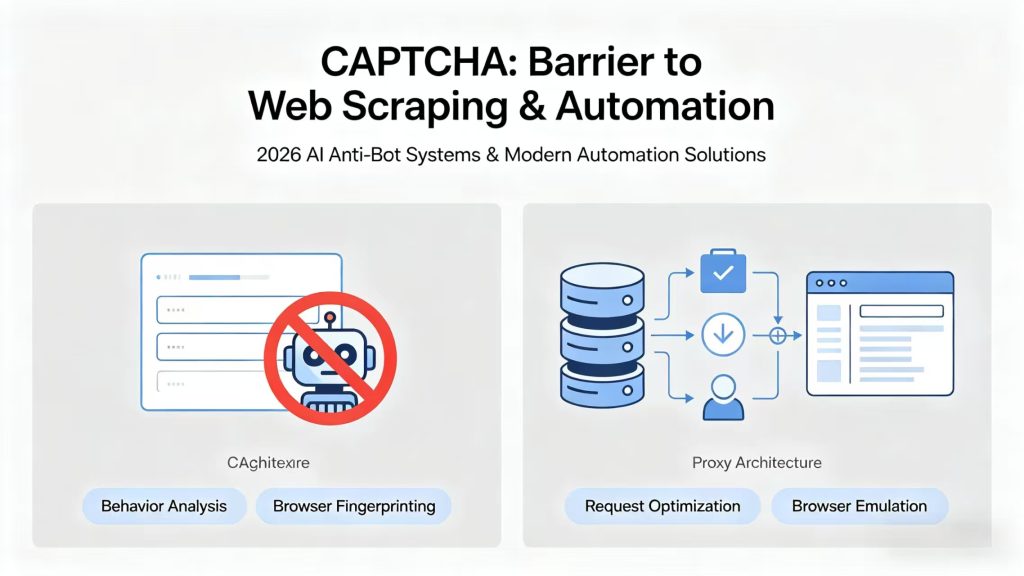

CAPTCHAs have become one of the most persistent barriers in modern web scraping, automation, and large-scale data collection. With anti-bot systems in 2026 relying on AI-driven behavioral analysis and advanced fingerprinting, CAPTCHAs can no longer be bypassed with simple scripts or isolated tools.

Instead, modern automation requires a structured architecture combining proxy infrastructure, request behavior optimization, and browser-level simulation.

For developers, data engineers, and growth teams working on web scraping, SEO monitoring, price intelligence, ad verification, and market research, understanding CAPTCHA mechanisms is essential for building stable and scalable data pipelines.

This guide breaks down six practical, production-tested methods used in real-world scraping systems to reduce CAPTCHA frequency and improve automation success rates.


Why CAPTCHA Systems Are More Advanced in 2026

Modern CAPTCHA systems no longer rely on simple challenge-response logic. Instead, they use AI-driven behavioral models that continuously evaluate traffic patterns in real time.

Rather than blocking based on a single factor, systems now combine multiple signals to build a risk score for each request.

Key detection signals include:

- IP reputation and ASN classification

- Residential vs. datacenter traffic patterns

- Browser and device fingerprint consistency

- Mouse movement, scrolling, and interaction timing

- Request frequency and session behavior anomalies

- TLS and network-level fingerprint analysis
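To make the idea of a combined risk score concrete, here is a minimal Python sketch. The signal names, weights, and thresholds are hypothetical: anti-bot vendors do not publish their models, and real systems typically learn weights from traffic data rather than hand-tuning them. The sketch only shows why a blend of moderate signals can trigger a challenge even when no single signal would.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only; they sum to 1.0 so the score
# stays in [0, 1]. Real systems learn weights from traffic data.
WEIGHTS = {
    "ip_reputation": 0.30,
    "datacenter_asn": 0.20,
    "fingerprint_mismatch": 0.20,
    "no_interaction": 0.10,
    "request_rate_anomaly": 0.15,
    "tls_mismatch": 0.05,
}


@dataclass
class RequestSignals:
    """Per-request signals, each normalized to [0, 1] (1 = most suspicious)."""
    ip_reputation: float
    datacenter_asn: float
    fingerprint_mismatch: float
    no_interaction: float
    request_rate_anomaly: float
    tls_mismatch: float


def risk_score(signals: RequestSignals) -> float:
    """Weighted sum of the signals, so no single one dominates the decision."""
    return sum(w * getattr(signals, name) for name, w in WEIGHTS.items())


def decide(score: float, challenge_at: float = 0.5, block_at: float = 0.8) -> str:
    """Map a score to an action; the thresholds are illustrative assumptions."""
    if score >= block_at:
        return "block"
    if score >= challenge_at:
        return "captcha"
    return "allow"


# A datacenter IP with moderate reputation, a bursty request rate,
# and a partly inconsistent fingerprint.
signals = RequestSignals(
    ip_reputation=0.5,
    datacenter_asn=1.0,
    fingerprint_mismatch=0.5,
    no_interaction=0.0,
    request_rate_anomaly=1.0,
    tls_mismatch=0.0,
)
print(round(risk_score(signals), 2), decide(risk_score(signals)))  # prints: 0.6 captcha
```

Because the largest single weight in this sketch is 0.30, no one signal can reach the 0.5 challenge threshold on its own; the example request is challenged only because several moderate signals stack up.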

Because of these layered detection mechanisms, traditional scraping setups using static datacenter proxies are no longer reliable at scale.

This is why proxy infrastructure has become a core component of modern data extraction systems.

Method 1: Residential Proxies as the Foundation

Residential proxies are generally the most effective single measure for reducing CAPTCHA triggers, because they route traffic through IP addresses that ISPs assign to real households.

Datacenter IPs belong to hosting-provider networks, which IP-reputation and ASN checks flag easily. Residential IPs belong to consumer ISP networks, so the same checks treat their traffic as ordinary user traffic.

Why residential proxies work:

- IPs come from real household networks

- Lower baseline risk from IP-reputation and ASN checks

- Higher success rates on high-security platforms

- Better compatibility with search engines and e-commerce sites

- Reduced risk of IP-level blocking

In most scraping architectures, residential proxies form the first and most important layer of a CAPTCHA-reduction strategy.

Method 2: Intelligent IP Rotation Strategy

One of the most common CAPTCHA triggers is a repetitive traffic pattern coming from the same IP or session.

A well-designed rotation strategy distributes requests across multiple IPs while maintaining stability where needed.

Best practices:

- Rotate IPs per request or per session depending on target sensitivity

- Use geo-targeted IP allocation for localized scraping tasks

- Avoid predictable request intervals

- Use sticky sessions for login or authentication workflows

- Prevent reuse of identical IP + fingerprint combinations

In production environments, IP rotation is often automated through proxy management systems to ensure consistency and scalability.
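A rotation layer of this kind can be sketched in a few lines of Python. This is a hypothetical helper, not any provider's API: `ProxyRotator`, the per-country pools, and the `*.proxy.example` URLs are all placeholders. It shows per-request rotation, geo-targeted pools, and sticky sessions for login flows.

```python
import itertools
from typing import Dict, List, Optional


class ProxyRotator:
    """Per-request rotation plus sticky sessions, with per-country pools.

    Proxy URLs are placeholders; in practice they come from a proxy provider
    or a proxy-management layer.
    """

    def __init__(self, pools: Dict[str, List[str]]) -> None:
        if not pools or any(not urls for urls in pools.values()):
            raise ValueError("every country needs at least one proxy")
        # One independent round-robin cycle per country (geo-targeting).
        self._cycles = {c: itertools.cycle(urls) for c, urls in pools.items()}
        # session_id -> pinned proxy, for login or checkout flows.
        self._sticky: Dict[str, str] = {}

    def next_proxy(self, country: str, session_id: Optional[str] = None) -> str:
        cycle = self._cycles[country]  # KeyError for an unknown country
        if session_id is None:
            # Per-request rotation: every call moves to the next IP.
            return next(cycle)
        if session_id not in self._sticky:
            # The first request of a session pins the next IP in the cycle.
            self._sticky[session_id] = next(cycle)
        return self._sticky[session_id]

    def end_session(self, session_id: str) -> None:
        """Release a pinned IP, e.g. after logout."""
        self._sticky.pop(session_id, None)


rotator = ProxyRotator({
    "us": ["http://us-1.proxy.example:8000", "http://us-2.proxy.example:8000"],
    "de": ["http://de-1.proxy.example:8000"],
})
print(rotator.next_proxy("us"))                      # us-1 proxy
print(rotator.next_proxy("us"))                      # us-2 proxy
print(rotator.next_proxy("us", session_id="login"))  # pinned: us-1 proxy
print(rotator.next_proxy("us", session_id="login"))  # same IP again
```

With the `requests` library, a returned URL can be passed as `proxies={"http": url, "https": url}`. Keeping sticky assignments in a separate map means a login session keeps its IP while unrelated requests continue to rotate.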

Method 3: Browser Fingerprint Control

Modern anti-bot systems rely heavily on browser fingerprinting, which analyzes device-level characteristics beyond IP addresses.

Even with proxies, inconsistent fingerprints can still trigger CAPTCHA challenges.

Key optimization techniques:

- Rotate User-Agent strings dynamically

- Use stealth frameworks like Playwright Stealth or Puppeteer Stealth

- Hide automation indicators such as navigator.webdriver

- Align timezone, language, screen resolution, and other system settings with each other and with the proxy's location

- Normalize WebGL and canvas fingerprint outputs when necessary

When combined with residential proxies, fingerprint control makes requests look considerably more like ordinary user traffic.

Method 4: Human-Like Interaction Simulation

CAPTCHA systems increasingly rely on behavioral analysis rather than static rules. They evaluate how a user interacts with a page over time.

To reduce detection risk, automation systems must simulate natural browsing behavior.

Effective simulation methods:

- Randomized delays between actions instead of fixed intervals

- Realistic scrolling patterns and viewport interactions

- Mouse movement simulation with non-linear paths

- Page reading delays on content-heavy sections

- Randomized navigation paths instead of sequential crawling

This behavioral layer is especially important for SERP scraping, e-commerce monitoring, and social media data collection.
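The timing side of this layer (randomized delays, reading pauses, and non-sequential navigation) can be sketched in Python as follows. The function names and every constant, including the assumed reading speed of 4 words per second, are illustrative assumptions rather than values taken from any detection system.

```python
import random
from typing import List


def human_delay(
    rng: random.Random,
    base: float = 1.0,
    jitter: float = 0.5,
    words_on_page: int = 0,
    words_per_second: float = 4.0,
) -> float:
    """Seconds to wait before the next action.

    base +/- jitter replaces a fixed interval; an extra term scales with the
    amount of text on the page as a rough stand-in for reading time.
    All default values are illustrative assumptions.
    """
    pause = rng.uniform(base - jitter, base + jitter)
    if words_on_page > 0:
        # Assume the visitor reads a random 30-70% of the text.
        pause += rng.uniform(0.3, 0.7) * words_on_page / words_per_second
    return max(pause, 0.0)


def crawl_order(urls: List[str], rng: random.Random) -> List[str]:
    """Visit pages in a shuffled order instead of strictly sequentially."""
    return rng.sample(urls, k=len(urls))


rng = random.Random()
urls = [f"https://shop.example/product/{i}" for i in range(5)]
for url in crawl_order(urls, rng):
    wait = human_delay(rng, words_on_page=300)
    print(f"{url}: wait {wait:.1f}s")  # a real crawler would time.sleep(wait)
```

Passing a `random.Random` instance in, rather than using the module-level functions, makes the pacing reproducible in tests by seeding it.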

Method 5: ISP Proxies for Stable Sessions

ISP proxies (also known as static residential proxies) offer a balance between speed, stability, and trustworthiness.

They use IP addresses registered to real ISPs but are hosted on datacenter servers, so they combine ISP-grade IP reputation with datacenter-grade speed and uptime. This makes them well suited to long-running sessions.

Common use cases:

- E-commerce automation workflows

- Account-based scraping and login systems

- API-heavy data extraction tasks

- Continuous monitoring systems requiring IP stability

Unlike rotating residential proxies, ISP proxies are designed for consistency rather than high-frequency rotation.

Method 6: CAPTCHA Solving Services (Fallback Layer)

In some environments, CAPTCHA cannot be fully avoided due to strict anti-bot protection systems.

In these cases, CAPTCHA solving services can be used as a fallback layer rather than a primary solution.

Common approaches:

- AI-based image recognition systems

- Human-powered solving networks

- API-based CAPTCHA resolution services

- Hybrid systems combining AI and human validation

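However, relying solely on CAPTCHA solvers is inefficient for large-scale operations: every solve adds latency and a per-CAPTCHA cost. Solvers should always be combined with proxy and behavior optimization strategies that keep the number of challenges low in the first place.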

Best Practice: Multi-Layer Anti-CAPTCHA Architecture

In 2026, effective data extraction systems are built using layered architectures rather than single-method solutions.

A production-grade setup typically includes:

- Residential proxy infrastructure

- Intelligent IP rotation system

- Stealth browser automation

- Human-like behavior simulation

- Optional CAPTCHA solving fallback layer

This multi-layer approach significantly reduces detection rates and improves long-term scraping stability.
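The layers listed above can be wired together roughly as follows. This Python sketch is a hypothetical orchestration, not a reference architecture: `fetch` stands in for the stealth-browser and behavior layers, `looks_like_challenge` is a crude placeholder heuristic, and the cooldown values are assumptions. Its point is the control flow: rotate away from an IP that gets challenged, back that IP off exponentially, and reach the optional solving layer only after the cheaper layers have failed.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# fetch(url, proxy) -> (status_code, body). In a real system this would be the
# stealth-browser layer; it is injected so the sketch stays library-agnostic.
Fetch = Callable[[str, str], Tuple[int, str]]


def looks_like_challenge(status: int, body: str) -> bool:
    """Crude, hypothetical heuristic for spotting a block or CAPTCHA page."""
    return status in (403, 429) or "captcha" in body.lower()


@dataclass
class LayeredFetcher:
    proxies: List[str]
    fetch: Fetch
    max_attempts: int = 3
    base_cooldown: float = 30.0  # seconds; illustrative
    # Optional last layer (e.g. a solving service); None means "give up".
    fallback: Optional[Callable[[str], Optional[str]]] = None
    strikes: Dict[str, int] = field(default_factory=dict)
    cooldown_until: Dict[str, float] = field(default_factory=dict)

    def _pick(self, now: float) -> Optional[str]:
        """Least-challenged proxy that is not cooling down."""
        ready = [p for p in self.proxies if self.cooldown_until.get(p, 0.0) <= now]
        return min(ready, key=lambda p: self.strikes.get(p, 0)) if ready else None

    def get(self, url: str, now: float) -> Optional[str]:
        """Fetch url; `now` would be time.monotonic() in a real caller."""
        for _ in range(self.max_attempts):
            proxy = self._pick(now)
            if proxy is None:
                break  # every IP is cooling down
            status, body = self.fetch(url, proxy)
            if not looks_like_challenge(status, body):
                return body
            # Challenged: bench this IP for base * 2**strikes seconds.
            n = self.strikes.get(proxy, 0)
            self.cooldown_until[proxy] = now + self.base_cooldown * 2 ** n
            self.strikes[proxy] = n + 1
        return self.fallback(url) if self.fallback else None


# Demo with a fake fetch: proxy "p1" is flagged, "p2" is clean.
def fake_fetch(url: str, proxy: str) -> Tuple[int, str]:
    return (200, "<html>ok</html>") if proxy == "p2" else (429, "captcha")


fetcher = LayeredFetcher(proxies=["p1", "p2"], fetch=fake_fetch)
print(fetcher.get("https://shop.example/", now=0.0))  # <html>ok</html>
print(fetcher.cooldown_until)                          # {'p1': 30.0}
```

Passing `now` explicitly keeps the cooldown logic testable without real sleeps; the fallback is just a callable, so the solving layer stays optional, as the list above describes.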

Real-World Applications

CAPTCHA mitigation strategies are widely used across multiple industries, including:

- E-commerce price intelligence (Amazon, Shopify, eBay)

- Search engine result tracking (SERP monitoring)

- Social media analytics and audience research

- Advertising verification and fraud detection

- Competitive market intelligence systems

Infrastructure Insight

Proxy infrastructure plays a critical role in modern CAPTCHA reduction strategies.

High-quality residential proxy networks provide the foundation for stable and scalable automation systems, enabling consistent access across high-security platforms.

Final Thoughts

Bypassing CAPTCHA in 2026 is no longer a single-method problem. It is an infrastructure-level challenge that requires combining proxy networks, browser fingerprint control, and intelligent request behavior design.

The most successful systems are those that replicate real user behavior at scale while maintaining flexibility and stability across different platforms.

As anti-bot systems continue to evolve, building a robust proxy-driven architecture remains essential for any serious web scraping or automation project.